A detailed step-by-step tutorial: building an Oracle 12cR1 RAC cluster on RHEL 6.5 + VMware Workstation

Tags: Oracle, high availability, RAC, cluster deployment, VMware Workstation, RHEL6.5, 12cR1

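- Operating system configuration
- Installing the hosts or virtual machines
- Changing the hostnames
- Network configuration
- Adding virtual NICs
- Configuring IP addresses
- Disabling the firewall
- Disabling SELinux
- Editing the /etc/hosts file
- Configuring NOZEROCONF
- Hardware requirements
- Memory
- Swap space
- /tmp space
- Disk space required by the Oracle installation
- Adding groups and users
- Adding the oracle and grid users
- Creating the installation directories
- Configuring the environment variable files for the grid and oracle users
- Configuring environment variables for the root user
- Checking software packages
- Configuring a local yum repository
- Installing missing packages
- Disabling unneeded services
- Configuring kernel parameters
- Operating system version
- Disabling Transparent Huge Pages (THP)
- Editing /etc/sysctl.conf
- Editing /etc/security/limits.conf
- Editing /etc/pam.d/login
- Editing /etc/profile
- Configuring the size of /dev/shm
- Configuring NTP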

Operating system configuration

Installing the hosts or virtual machines

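The installation steps are omitted here. Install one virtual machine, then copy it and rename the copy to create the second node.

Alternatively, you can download the ready-made virtual machine environment prepared by the author (小麦苗).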

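Changing the hostnames

Change the hostnames of the two nodes to raclhr-12cR1-N1 and raclhr-12cR1-N2:

```shell
# Persistent setting, read at boot: edit the file and set HOSTNAME
# (on node 2, use raclhr-12cR1-N2)
vi /etc/sysconfig/network
HOSTNAME=raclhr-12cR1-N1

# Apply the new name immediately, without a reboot
hostname raclhr-12cR1-N1
```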
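If you prefer not to edit the file interactively, the persistent part of this step can be scripted. The following is a minimal sketch: `set_persistent_hostname` is a hypothetical helper, and the file path is a parameter so it can be tried on a copy before touching /etc/sysconfig/network.

```shell
#!/bin/bash
# Hypothetical helper: set HOSTNAME=<name> in an RHEL 6 network file
# (default /etc/sysconfig/network) without opening an editor.
set_persistent_hostname() {
    local name="$1" file="${2:-/etc/sysconfig/network}"
    if grep -q '^HOSTNAME=' "$file"; then
        # Replace the existing HOSTNAME= line in place (GNU sed -i).
        sed -i "s/^HOSTNAME=.*/HOSTNAME=${name}/" "$file"
    else
        # No HOSTNAME= line yet: append one.
        echo "HOSTNAME=${name}" >> "$file"
    fi
}

# Usage on node 1 (use raclhr-12cR1-N2 on node 2):
#   set_persistent_hostname raclhr-12cR1-N1 && hostname raclhr-12cR1-N1
```

Running it twice leaves a single HOSTNAME= line, so it is safe to repeat.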

Network configuration

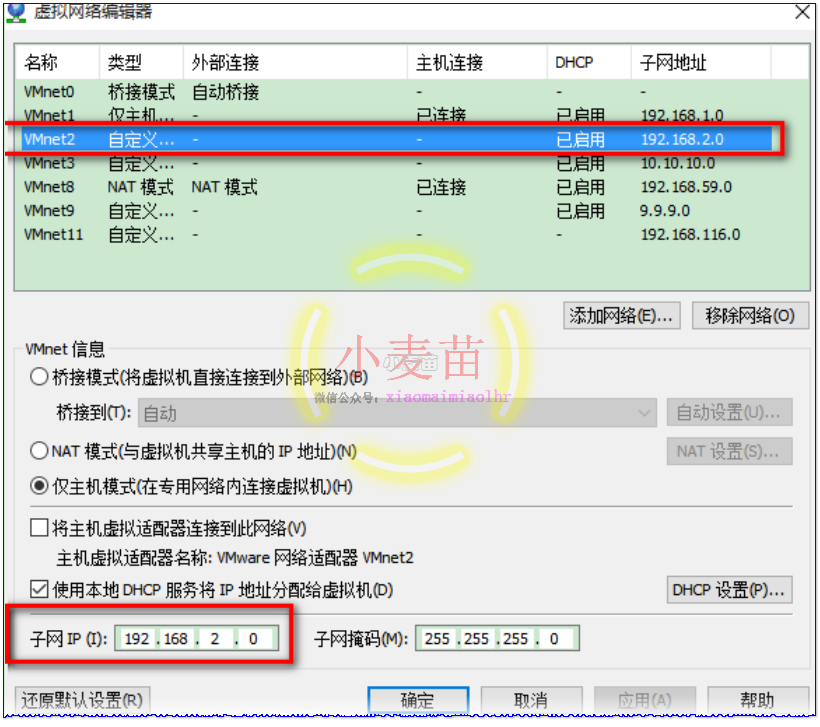

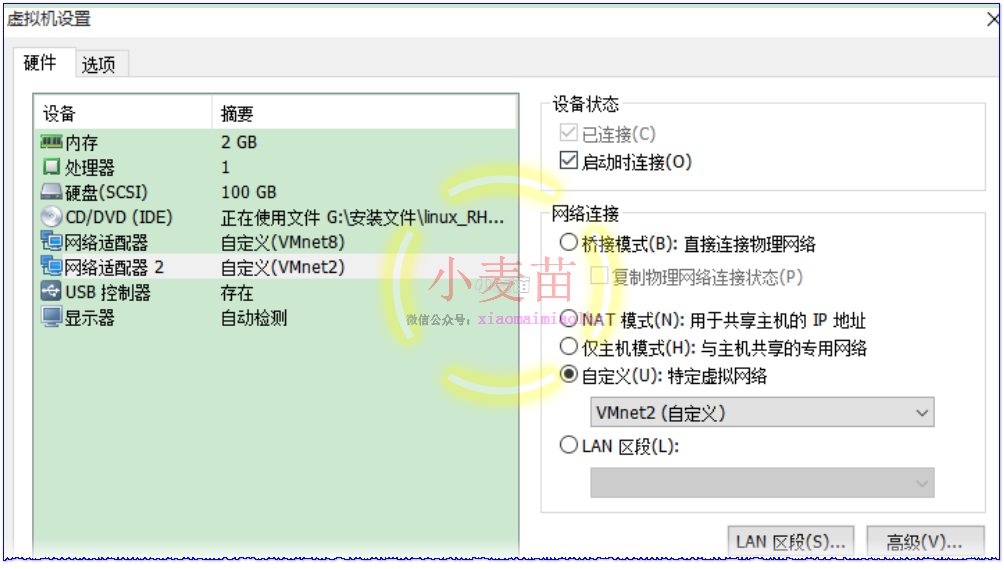

Adding virtual NICs

Add two NICs: VMnet8 for the public network and VMnet2 for the private network.

Configuring IP addresses

```shell
# Manage the interfaces with the network service instead of NetworkManager
chkconfig NetworkManager off
chkconfig network on
service NetworkManager stop
service network start
```

Perform the following steps on both nodes. On node 2, change the IP addresses accordingly (192.168.59.161 and 192.168.2.101, matching the /etc/hosts file).

Step 1: configure the public and private IP addresses.

Public network: vi /etc/sysconfig/network-scripts/ifcfg-eth0

```
DEVICE=eth0
IPADDR=192.168.59.160
NETMASK=255.255.255.0
NETWORK=192.168.59.0
BROADCAST=192.168.59.255
GATEWAY=192.168.59.2
ONBOOT=yes
USERCTL=no
BOOTPROTO=static
TYPE=Ethernet
IPV6INIT=no
```

Private network: vi /etc/sysconfig/network-scripts/ifcfg-eth1

```
DEVICE=eth1
IPADDR=192.168.2.100
NETMASK=255.255.255.0
NETWORK=192.168.2.0
BROADCAST=192.168.2.255
GATEWAY=192.168.2.1
ONBOOT=yes
USERCTL=no
BOOTPROTO=static
TYPE=Ethernet
IPV6INIT=no
```
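Because node 2 differs only in its addresses, the two files can also be generated instead of typed. This is a minimal sketch, assuming a /24 netmask on both networks as above; `make_ifcfg` is a hypothetical helper, and redirecting its output overwrites the existing file, including any HWADDR= or UUID= lines it contained.

```shell
#!/bin/bash
# Hypothetical helper: print the body of an ifcfg-<dev> file in the same
# layout as above. Assumes a /24 (255.255.255.0) subnet, so NETWORK and
# BROADCAST are derived from the first three octets of the address.
# GATEWAY is written only if a third argument is given.
make_ifcfg() {
    local dev="$1" ip="$2" gw="${3:-}"
    local net="${ip%.*}"            # 192.168.59.161 -> 192.168.59
    echo "DEVICE=${dev}"
    echo "IPADDR=${ip}"
    echo "NETMASK=255.255.255.0"
    echo "NETWORK=${net}.0"
    echo "BROADCAST=${net}.255"
    if [ -n "$gw" ]; then
        echo "GATEWAY=${gw}"
    fi
    echo "ONBOOT=yes"
    echo "USERCTL=no"
    echo "BOOTPROTO=static"
    echo "TYPE=Ethernet"
    echo "IPV6INIT=no"
}

# Example for node 2 (addresses from the /etc/hosts file in this guide):
#   cd /etc/sysconfig/network-scripts
#   make_ifcfg eth0 192.168.59.161 192.168.59.2 > ifcfg-eth0
#   make_ifcfg eth1 192.168.2.101  192.168.2.1  > ifcfg-eth1
```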

Step 2: clear the contents of /etc/udev/rules.d/70-persistent-net.rules. The cloned VM has new MAC addresses, and the stale rules would otherwise rename its NICs (for example to eth2 and eth3).

Step 3: reboot the host, then check the addresses:

```
[root@raclhr-12cR1-N1 ~]# ip a
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000
    link/ether 00:0c:29:d9:43:a7 brd ff:ff:ff:ff:ff:ff
    inet 192.168.59.160/24 brd 192.168.59.255 scope global eth0
    inet6 fe80::20c:29ff:fed9:43a7/64 scope link
       valid_lft forever preferred_lft forever
3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000
    link/ether 00:0c:29:d9:43:b1 brd ff:ff:ff:ff:ff:ff
    inet 192.168.2.100/24 brd 192.168.2.255 scope global eth1
    inet6 fe80::20c:29ff:fed9:43b1/64 scope link
       valid_lft forever preferred_lft forever
4: virbr0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN
    link/ether 52:54:00:68:da:bb brd ff:ff:ff:ff:ff:ff
    inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0
5: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN qlen 500
    link/ether 52:54:00:68:da:bb brd ff:ff:ff:ff:ff:ff
```

Disabling the firewall

Run the following commands on both nodes:

```shell
service iptables stop
service ip6tables stop
chkconfig iptables off
chkconfig ip6tables off

chkconfig iptables --list
```

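- chkconfig iptables off: permanent; the firewall stays off after a reboot.
- service iptables stop: temporary; stops the firewall now, but it starts again at the next boot unless chkconfig has also been run.
- /etc/init.d/iptables status: if this prints a list of rules, the firewall is running.
- /etc/rc.d/init.d/iptables stop: stops the firewall.

```shell
# Text-mode configuration menu (firewall settings included), run with an English locale
LANG=en_US setup
```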
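To confirm that the firewall will stay off after a reboot, the `chkconfig --list` output can be checked mechanically. This is a minimal sketch; `all_runlevels_off` is a hypothetical helper that reads the output on standard input and fails if any runlevel is still `on`.

```shell
#!/bin/bash
# Hypothetical helper: read `chkconfig --list <service>` lines on stdin and
# return non-zero (printing the service name) if any runlevel is still "on".
all_runlevels_off() {
    local line status=0
    while read -r line; do
        if [ -z "$line" ]; then
            continue
        fi
        case "$line" in
            *:on*)
                # Print only the first field (the service name).
                echo "still enabled: ${line%%[[:space:]]*}"
                status=1
                ;;
        esac
    done
    return "$status"
}

# Usage on each node:
#   chkconfig --list iptables  | all_runlevels_off
#   chkconfig --list ip6tables | all_runlevels_off
```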

Disabling SELinux

Edit /etc/selinux/config.

Change SELINUX=enforcing to SELINUX=disabled:

```
[root@raclhr-12cR1-N1 ~]# more /etc/selinux/config

# This file controls the state of SELinux on the system.
# SELINUX= can take one of these three values:
#     enforcing - SELinux security policy is enforced.
#     permissive - SELinux prints warnings instead of enforcing.
#     disabled - No SELinux policy is loaded.
SELINUX=disabled
# SELINUXTYPE= can take one of these two values:
#     targeted - Targeted processes are protected,
#     mls - Multi Level Security protection.
SELINUXTYPE=targeted

[root@raclhr-12cR1-N1 ~]#

# Turn SELinux off temporarily (no reboot needed). This switches it to
# permissive mode; SELINUX=disabled takes effect at the next reboot.
setenforce 0
```

Checking the SELinux status:

1. /usr/sbin/sestatus -v: if the SELinux status field shows enabled, SELinux is on, for example:

SELinux status: enabled

2. getenforce: this command can also be used for the check.

```
[root@raclhr-12cR1-N1 ~]# /usr/sbin/sestatus -v
SELinux status:                 disabled
[root@raclhr-12cR1-N1 ~]# getenforce
Disabled
[root@raclhr-12cR1-N1 ~]#
```
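`getenforce` reports the running mode, while the persistent setting lives in /etc/selinux/config; after `setenforce 0` the two can differ until the next reboot. This is a minimal sketch for reading the persistent value; `selinux_config_mode` is a hypothetical helper, and it assumes the file uses the plain `SELINUX=value` form shown above.

```shell
#!/bin/bash
# Hypothetical helper: print the persistent SELinux mode from the config file
# (default /etc/selinux/config). Only uncommented "SELINUX=" lines match;
# "SELINUXTYPE=" does not, because the character after SELINUX must be "=".
selinux_config_mode() {
    local file="${1:-/etc/selinux/config}"
    sed -n 's/^SELINUX=\(.*\)$/\1/p' "$file" | tail -n 1
}

# Usage: compare the persistent setting with the running mode.
#   echo "config: $(selinux_config_mode)  running: $(getenforce)"
# After "setenforce 0" this typically shows "config: disabled  running: Permissive"
# until the next reboot.
```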

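Editing the /etc/hosts file

The file is configured identically on both nodes, as follows. In the ping tests that follow, the VIP and SCAN names are unreachable; this is expected at this stage, because Grid Infrastructure brings these addresses online only after it has been installed and started.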

```
[root@raclhr-12cR1-N2 ~]# more /etc/hosts
# Do not remove the following line, or various programs
# that require network functionality will fail.
127.0.0.1   localhost localhost.localdomain localhost4 localhost4.localdomain4
::1         localhost localhost.localdomain localhost6 localhost6.localdomain6

#Public IP
192.168.59.160 raclhr-12cR1-N1
192.168.59.161 raclhr-12cR1-N2

#Private IP
192.168.2.100 raclhr-12cR1-N1-priv
192.168.2.101 raclhr-12cR1-N2-priv

#Virtual IP
192.168.59.162 raclhr-12cR1-N1-vip
192.168.59.163 raclhr-12cR1-N2-vip

#Scan IP
192.168.59.164 raclhr-12cR1-scan
[root@raclhr-12cR1-N2 ~]#

[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1
PING raclhr-12cR1-N1 (192.168.59.160) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N1 (192.168.59.160): icmp_seq=1 ttl=64 time=0.018 ms
64 bytes from raclhr-12cR1-N1 (192.168.59.160): icmp_seq=2 ttl=64 time=0.052 ms
^C
--- raclhr-12cR1-N1 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1573ms
rtt min/avg/max/mdev = 0.018/0.035/0.052/0.017 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2
PING raclhr-12cR1-N2 (192.168.59.161) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N2 (192.168.59.161): icmp_seq=1 ttl=64 time=1.07 ms
64 bytes from raclhr-12cR1-N2 (192.168.59.161): icmp_seq=2 ttl=64 time=0.674 ms
^C
--- raclhr-12cR1-N2 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1543ms
rtt min/avg/max/mdev = 0.674/0.876/1.079/0.204 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1-priv
PING raclhr-12cR1-N1-priv (192.168.2.100) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N1-priv (192.168.2.100): icmp_seq=1 ttl=64 time=0.015 ms
64 bytes from raclhr-12cR1-N1-priv (192.168.2.100): icmp_seq=2 ttl=64 time=0.056 ms
^C
--- raclhr-12cR1-N1-priv ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1297ms
rtt min/avg/max/mdev = 0.015/0.035/0.056/0.021 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2-priv
PING raclhr-12cR1-N2-priv (192.168.2.101) 56(84) bytes of data.
64 bytes from raclhr-12cR1-N2-priv (192.168.2.101): icmp_seq=1 ttl=64 time=1.10 ms
64 bytes from raclhr-12cR1-N2-priv (192.168.2.101): icmp_seq=2 ttl=64 time=0.364 ms
^C
--- raclhr-12cR1-N2-priv ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1421ms
rtt min/avg/max/mdev = 0.364/0.733/1.102/0.369 ms
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N1-vip
PING raclhr-12cR1-N1-vip (192.168.59.162) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=4 Destination Host Unreachable
^C
--- raclhr-12cR1-N1-vip ping statistics ---
4 packets transmitted, 0 received, +3 errors, 100% packet loss, time 3901ms
pipe 3
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-N2-vip
PING raclhr-12cR1-N2-vip (192.168.59.163) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=1 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
^C
--- raclhr-12cR1-N2-vip ping statistics ---
5 packets transmitted, 0 received, +3 errors, 100% packet loss, time 4026ms
pipe 3
[root@raclhr-12cR1-N1 ~]# ping raclhr-12cR1-scan
PING raclhr-12cR1-scan (192.168.59.164) 56(84) bytes of data.
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=2 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=3 Destination Host Unreachable
From raclhr-12cR1-N1 (192.168.59.160) icmp_seq=4 Destination Host Unreachable
^C
--- raclhr-12cR1-scan ping statistics ---
5 packets transmitted, 0 received, +3 errors, 100% packet loss, time 4501ms
pipe 3
[root@raclhr-12cR1-N1 ~]#
```
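Since both nodes must carry the same entries, it can help to check each node's /etc/hosts against one expected list instead of reading it by eye. This is a minimal sketch; `check_hosts_entries` is a hypothetical helper that reads `IP name` pairs on standard input and reports any pair that is missing from the hosts file.

```shell
#!/bin/bash
# Hypothetical helper: read "IP hostname" pairs on stdin and check that each
# pair appears in the hosts file (default /etc/hosts). Prints every missing
# pair and returns non-zero if any is missing.
check_hosts_entries() {
    local hosts_file="${1:-/etc/hosts}" ip name status=0
    while read -r ip name; do
        if [ -z "$ip" ]; then
            continue
        fi
        # The IP must be the first field and the name one of the later fields;
        # comment lines such as "#Public IP" never match because of the "#".
        if ! awk -v ip="$ip" -v n="$name" \
            '$1 == ip { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
             END { exit !found }' "$hosts_file"; then
            echo "missing: $ip $name"
            status=1
        fi
    done
    return "$status"
}

# Usage on each node:
#   check_hosts_entries /etc/hosts <<'EOF'
#   192.168.59.160 raclhr-12cR1-N1
#   192.168.59.161 raclhr-12cR1-N2
#   192.168.2.100  raclhr-12cR1-N1-priv
#   192.168.2.101  raclhr-12cR1-N2-priv
#   192.168.59.162 raclhr-12cR1-N1-vip
#   192.168.59.163 raclhr-12cR1-N2-vip
#   192.168.59.164 raclhr-12cR1-scan
#   EOF
```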

Configuring NOZEROCONF

Add the following line to /etc/sysconfig/network (vi /etc/sysconfig/network):

```
NOZEROCONF=yes
```
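NOZEROCONF=yes stops the RHEL 6 network scripts from adding the 169.254.0.0/16 link-local (zeroconf) route to each interface. After restarting the network service, you can confirm that the route is gone. This is a minimal sketch; `zeroconf_route_present` is a hypothetical helper that reads `ip route` output on standard input.

```shell
#!/bin/bash
# Hypothetical helper: read `ip route` output on stdin and succeed if the
# 169.254.0.0/16 zeroconf route is still present.
zeroconf_route_present() {
    grep -q '^169\.254\.0\.0/16 '
}

# Usage: add the setting once (idempotent), restart networking, then check.
#   grep -q '^NOZEROCONF=' /etc/sysconfig/network || echo "NOZEROCONF=yes" >> /etc/sysconfig/network
#   service network restart
#   if ip route | zeroconf_route_present; then echo "zeroconf route still present"; fi
```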

Hardware requirements

Memory

Check it with: grep MemTotal /proc/meminfo

```
[root@raclhr-12cR1-N1 ~]# grep MemTotal /proc/meminfo
MemTotal:        2046592 kB
[root@raclhr-12cR1-N1 ~]#
```
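The same figure can be read from a script, for example to compare it with the memory minimum in the installation guide for your release. This is a minimal sketch: `meminfo_kb` and `mem_at_least_gb` are hypothetical helpers, and the 4 GB default threshold is an assumption to check against the guide. MemTotal is slightly below the installed RAM because the kernel reserves part of it, which is why the output above is just under 2 GB (2097152 kB).

```shell
#!/bin/bash
# Hypothetical helper: print one /proc/meminfo field in kB (e.g. MemTotal).
meminfo_kb() {
    local field="$1" file="${2:-/proc/meminfo}"
    awk -v f="${field}:" '$1 == f { print $2 }' "$file"
}

# Hypothetical helper: succeed if MemTotal is at least MIN_GB gigabytes.
# The default of 4 is an assumption; use the minimum from your release's guide.
mem_at_least_gb() {
    local min_gb="${1:-4}" file="${2:-/proc/meminfo}" kb
    kb=$(meminfo_kb MemTotal "$file")
    [ "$kb" -ge $(( min_gb * 1024 * 1024 )) ]
}

# Usage:
#   echo "MemTotal: $(meminfo_kb MemTotal) kB"
#   mem_at_least_gb 4 || echo "less than 4 GB of RAM"
```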

Swap space

| RAM | Swap space |
|---|---|
| 1 GB to 2 GB | 1.5 × RAM |
| 2 GB to 16 GB | Equal to RAM |
| More than 16 GB | 16 GB |

Check it with: grep SwapTotal /proc/meminfo

```
[root@raclhr-12cR1-N1 ~]# grep SwapTotal /proc/meminfo
SwapTotal:       2097144 kB
[root@raclhr-12cR1-N1 ~]#
```
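The swap guideline can be turned into a quick calculation to compare against SwapTotal. This is a minimal sketch; `recommended_swap_kb` is a hypothetical helper that takes RAM in kB and applies the 1.5 × RAM / equal-to-RAM / 16 GB tiers, treating anything above 16 GB as the 16 GB tier.

```shell
#!/bin/bash
# Hypothetical helper: recommended swap size in kB for a RAM size in kB.
recommended_swap_kb() {
    local ram_kb="$1"
    local gb=$(( 1024 * 1024 ))          # 1 GB expressed in kB
    if [ "$ram_kb" -le $(( 2 * gb )) ]; then
        echo $(( ram_kb * 3 / 2 ))       # up to 2 GB: 1.5 x RAM
    elif [ "$ram_kb" -le $(( 16 * gb )) ]; then
        echo "$ram_kb"                   # 2 GB to 16 GB: equal to RAM
    else
        echo $(( 16 * gb ))              # more than 16 GB: 16 GB
    fi
}

# Usage on a node:
#   ram=$(awk '$1 == "MemTotal:" { print $2 }' /proc/meminfo)
#   swap=$(awk '$1 == "SwapTotal:" { print $2 }' /proc/meminfo)
#   echo "RAM ${ram} kB, swap ${swap} kB, recommended $(recommended_swap_kb "$ram") kB"
```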

/tmp space

A separate /tmp file system is recommended. The author (小麦苗) uses a logical volume here, which makes it easy to extend.

```
[root@raclhr-12cR1-N1 ~]# df -h
Filesystem                    Size  Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00  9.9G  4.9G  4.6G  52% /
tmpfs                        1000M   72K 1000M   1% /dev/shm
/dev/sda1                     194M   35M  150M  19% /boot
/dev/mapper/vg_rootlhr-Vol01  3.0G   70M  2.8G   3% /tmp
/dev/mapper/vg_rootlhr-Vol03  3.0G   69M  2.8G   3% /home
.host:/                       331G  229G  102G  70% /mnt/hgfs
```
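Free space in /tmp can also be checked from a script. This is a minimal sketch; `free_space_at_least_mb` is a hypothetical helper, and the 1024 MB in the usage line is an assumption based on the usual 1 GB /tmp minimum, so check the figure for your release.

```shell
#!/bin/bash
# Hypothetical helper: succeed if the file system holding PATH has at least
# MIN_MB megabytes available.
free_space_at_least_mb() {
    local path="$1" min_mb="$2" avail_kb
    # -P keeps each file system on one line even with long device names such
    # as /dev/mapper/vg_rootlhr-Vol01; -k reports sizes in 1 KB blocks, and
    # the fourth column of the data line is the available space.
    avail_kb=$(df -Pk "$path" | awk 'NR == 2 { print $4 }')
    [ "$avail_kb" -ge $(( min_mb * 1024 )) ]
}

# Usage (1024 MB is an assumed minimum; check your release's guide):
#   free_space_at_least_mb /tmp 1024 && echo "/tmp has at least 1 GB free"
```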

Disk space required by the Oracle installation

Local disk: /u01 is used as the installation location for the following software:

- Oracle Grid Infrastructure software: 6.8GB

- Oracle Enterprise Edition software: 5.3GB

```shell
# Create a 20 GB logical volume for /u01 from three partitions of /dev/sdb
# (repeat on each node, since /u01 is local)
vgcreate vg_orasoft /dev/sdb1 /dev/sdb2 /dev/sdb3
lvcreate -n lv_orasoft_u01 -L 20G vg_orasoft
mkfs.ext4 /dev/vg_orasoft/lv_orasoft_u01

# Mount it and make the mount persistent (back up fstab first)
mkdir /u01
mount /dev/vg_orasoft/lv_orasoft_u01 /u01
cp /etc/fstab /etc/fstab.`date +%Y%m%d`
echo "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0" >> /etc/fstab
cat /etc/fstab
```

```
[root@raclhr-12cR1-N2 ~]# vgcreate vg_orasoft /dev/sdb1 /dev/sdb2 /dev/sdb3
  Volume group "vg_orasoft" successfully created
[root@raclhr-12cR1-N2 ~]# lvcreate -n lv_orasoft_u01 -L 20G vg_orasoft
  Logical volume "lv_orasoft_u01" created
[root@raclhr-12cR1-N2 ~]# mkfs.ext4 /dev/vg_orasoft/lv_orasoft_u01
mke2fs 1.41.12 (17-May-2010)
Filesystem label=
OS type: Linux
Block size=4096 (log=2)
Fragment size=4096 (log=2)
Stride=0 blocks, Stripe width=0 blocks
1310720 inodes, 5242880 blocks
262144 blocks (5.00%) reserved for the super user
First data block=0
Maximum filesystem blocks=4294967296
160 block groups
32768 blocks per group, 32768 fragments per group
8192 inodes per group
Superblock backups stored on blocks:
        32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,
        4096000

Writing inode tables: done
Creating journal (32768 blocks): done
Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 39 mounts or
180 days, whichever comes first.  Use tune2fs -c or -i to override.
[root@raclhr-12cR1-N2 ~]# mkdir /u01
[root@raclhr-12cR1-N2 ~]# mount /dev/vg_orasoft/lv_orasoft_u01 /u01
[root@raclhr-12cR1-N2 ~]# cp /etc/fstab /etc/fstab.`date +%Y%m%d`
[root@raclhr-12cR1-N2 ~]# echo "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0" >> /etc/fstab
[root@raclhr-12cR1-N2 ~]# cat /etc/fstab

#
# /etc/fstab
# Created by anaconda on Sat Jan 14 18:56:24 2017
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/vg_rootlhr-Vol00 /          ext4    defaults        1 1
UUID=fccf51c1-2d2f-4152-baac-99ead8cfbc1a /boot ext4 defaults   1 2
/dev/mapper/vg_rootlhr-Vol01 /tmp       ext4    defaults        1 2
/dev/mapper/vg_rootlhr-Vol02 swap       swap    defaults        0 0
tmpfs                   /dev/shm        tmpfs   defaults        0 0
devpts                  /dev/pts        devpts  gid=5,mode=620  0 0
sysfs                   /sys            sysfs   defaults        0 0
proc                    /proc           proc    defaults        0 0
/dev/vg_rootlhr/Vol03 /home ext4 defaults 0 0
/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0
[root@raclhr-12cR1-N2 ~]# df -h
Filesystem                             Size  Used Avail Use% Mounted on
/dev/mapper/vg_rootlhr-Vol00           9.9G  4.9G  4.6G  52% /
tmpfs                                 1000M   72K 1000M   1% /dev/shm
/dev/sda1                              194M   35M  150M  19% /boot
/dev/mapper/vg_rootlhr-Vol01           3.0G   70M  2.8G   3% /tmp
/dev/mapper/vg_rootlhr-Vol03           3.0G   69M  2.8G   3% /home
.host:/                                331G  234G   97G  71% /mnt/hgfs
/dev/mapper/vg_orasoft-lv_orasoft_u01   20G  172M   19G   1% /u01
[root@raclhr-12cR1-N2 ~]# vgs
  VG         #PV #LV #SN Attr   VSize  VFree
  vg_orasoft   3   1   0 wz--n- 29.99g 9.99g
  vg_rootlhr   2   4   0 wz--n- 19.80g 1.80g
[root@raclhr-12cR1-N2 ~]# lvs
  LV             VG         Attr       LSize  Pool Origin Data%  Move Log Cpy%Sync Convert
  lv_orasoft_u01 vg_orasoft -wi-ao---- 20.00g
  Vol00          vg_rootlhr -wi-ao---- 10.00g
  Vol01          vg_rootlhr -wi-ao----  3.00g
  Vol02          vg_rootlhr -wi-ao----  2.00g
  Vol03          vg_rootlhr -wi-ao----  3.00g
[root@raclhr-12cR1-N2 ~]# pvs
  PV         VG         Fmt  Attr PSize  PFree
  /dev/sda2  vg_rootlhr lvm2 a--  10.00g      0
  /dev/sda3  vg_rootlhr lvm2 a--   9.80g  1.80g
  /dev/sdb1  vg_orasoft lvm2 a--  10.00g      0
  /dev/sdb10            lvm2 a--  10.00g 10.00g
  /dev/sdb11            lvm2 a--   9.99g  9.99g
  /dev/sdb2  vg_orasoft lvm2 a--  10.00g      0
  /dev/sdb3  vg_orasoft lvm2 a--  10.00g  9.99g
  /dev/sdb5             lvm2 a--  10.00g 10.00g
  /dev/sdb6             lvm2 a--  10.00g 10.00g
  /dev/sdb7             lvm2 a--  10.00g 10.00g
  /dev/sdb8             lvm2 a--  10.00g 10.00g
  /dev/sdb9             lvm2 a--  10.00g 10.00g
[root@raclhr-12cR1-N2 ~]#
```
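Running the `echo ... >> /etc/fstab` line twice would add a duplicate /u01 entry. Here is a minimal sketch of an idempotent alternative; `add_fstab_entry` is a hypothetical helper that appends the entry only when no uncommented line already uses that mount point, and it keeps the same dated backup as above.

```shell
#!/bin/bash
# Hypothetical helper: append ENTRY to an fstab file (default /etc/fstab)
# only if no uncommented line already mounts something on the same mount
# point. A dated backup is taken before the file is changed.
add_fstab_entry() {
    local entry="$1" file="${2:-/etc/fstab}" mnt
    mnt=$(echo "$entry" | awk '{ print $2 }')    # second field = mount point
    if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { found = 1 } END { exit !found }' "$file"; then
        echo "already present: $mnt"
        return 0
    fi
    cp "$file" "${file}.$(date +%Y%m%d)"
    echo "$entry" >> "$file"
}

# Usage:
#   add_fstab_entry "/dev/vg_orasoft/lv_orasoft_u01 /u01 ext4 defaults 0 0"
#   mount -a && df -h /u01
```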

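Adding groups and users

Adding the oracle and grid users

Starting with Oracle 11gR2, a RAC installation requires both the Oracle Grid Infrastructure software and the Oracle Database software; Grid Infrastructure corresponds to Clusterware in Oracle 10g. Oracle recommends installing Grid Infrastructure and the database software as different users: typically the grid user installs Grid Infrastructure and the oracle user installs the database software. The grid and oracle users should belong to different (role-specific) groups. For RAC, both users must also have the same UID and GID on every node.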

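- Create six groups: dba and oinstall serve as the OSDBA group and the Oracle Inventory group, oper as the OSOPER group, and asmdba, asmoper and asmadmin as the ASM administration groups.

- Create two users, oracle and grid: oracle belongs to dba and asmdba, grid belongs to asmadmin, asmdba, asmoper and dba, and oinstall is the primary group of both.

- The names and IDs of all the users and groups created above, their passwords, and their group memberships (including order) must be identical on every node. The passwords of grid and oracle must never expire.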

Create the groups:

```shell
groupadd -g 1000 oinstall
groupadd -g 1001 dba
groupadd -g 1002 oper
groupadd -g 1003 asmadmin
groupadd -g 1004 asmdba
groupadd -g 1005 asmoper
```

Create the grid and oracle users:

```shell
useradd -u 1000 -g oinstall -G asmadmin,asmdba,asmoper,dba -d /home/grid -m grid
useradd -u 1001 -g oinstall -G dba,asmdba -d /home/oracle -m oracle
```
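Because the UIDs, primary group and group memberships must match on both nodes, a small check run on each node can catch typos in the useradd commands. This is a minimal sketch; `check_user` is a hypothetical helper, and the expected values in the usage comments are taken from the commands above. It checks group membership only, not the order of the -G list.

```shell
#!/bin/bash
# Hypothetical helper: verify that USER exists on this node with the expected
# UID, primary group name and supplementary groups. Prints each difference
# and returns non-zero if anything is off.
check_user() {
    local user="$1" want_uid="$2" want_group="$3"; shift 3
    local status=0 g
    if ! id "$user" >/dev/null 2>&1; then
        echo "no such user: $user"
        return 1
    fi
    [ "$(id -u "$user")" = "$want_uid" ] ||
        { echo "$user: uid $(id -u "$user") != $want_uid"; status=1; }
    [ "$(id -gn "$user")" = "$want_group" ] ||
        { echo "$user: primary group $(id -gn "$user") != $want_group"; status=1; }
    for g in "$@"; do
        # id -Gn lists all group names; pad with spaces for whole-word matching.
        case " $(id -Gn "$user") " in
            *" $g "*) ;;
            *) echo "$user: not in group $g"; status=1 ;;
        esac
    done
    return "$status"
}

# Usage on every node:
#   check_user grid   1000 oinstall asmadmin asmdba asmoper dba
#   check_user oracle 1001 oinstall dba asmdba
```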

If the oracle user already exists, modify it instead:

```shell
usermod -g oinstall -G dba,asmdba -u 1001 oracle
```

Set passwords for the oracle and grid users:

```shell
passwd oracle
passwd grid
```

Make the passwords never expire:

```shell
chage -M -1 oracle
chage -M -1 grid

# Verify: "Password expires" should show "never"
chage -l oracle
chage -l grid
```

Check: